What is an AI Factory?

An AI Factory is a new infrastructure and operating model that lets companies elevate AI from isolated initiatives to a system that drives business growth. It goes beyond one-off PoCs or tools introduced department by department, and provides a foundation for continuously producing AI by organizing everything from data collection to model development and service delivery as a single "factory."

Why is the AI Factory needed now?

With the emergence of generative AI and large language models (LLMs), AI has evolved from a tool for streamlining certain operations into a strategic theme that transforms businesses and business models themselves. However, when AI is introduced separately in each department, it fails to scale, and the result is duplicated costs, weak governance, and security risks.

AI Factory aims to maximize return on investment and speed up development by consolidating these dispersed AI investments and standardizing them as a common, company-wide AI production infrastructure. As a result, even with limited budgets and staff, companies can develop more AI services at high quality.

What results can be produced?

By establishing an AI Factory, companies can continuously and systematically increase the number of AI applications built on their own data. For example, they can deploy AI across multiple business domains: not only automating customer responses, but also forecasting demand, optimizing supply chains, inspecting quality on production lines, and automating back-office operations.

Furthermore, building continuous model training and performance monitoring into the system makes it possible to keep improving the accuracy and value of AI as the environment changes and data grows, rather than "building it once and being done." This leads not only to sales growth and cost reduction, but also to a mid- to long-term competitive advantage through more sophisticated decision-making and the creation of new businesses.

What you can do with AI Factory

1. Mass production of AI models and services

・Feed in your own data to quickly train, fine-tune, and deploy generative AI models, LLMs, image recognition models, and more. In manufacturing, you can build a digital twin of the factory and optimize the production line.

・Deploy hundreds or thousands of AI agents to scale out customer response automation and business process personalization.

・Models that used to be developed one at a time can now be produced in parallel, as in a factory, and quickly turned into services across multiple departments and channels.

2. Advancement of industrial and business fields

・AI Factory evolves AI development and operation from department-specific experiments into a system that can be reused across the entire company. Letting departments share common models, data, and workflows improves productivity across the organization.

・Accelerating model development and inference not only improves the efficiency of existing operations, but also supports the creation of new data services and AI-driven business models.

・By repositioning AI from a "tool used on a business-by-business basis" to a "foundation that drives business expansion," companies can continuously strengthen their competitiveness.

3. Continuous improvement and maximizing ROI

・Operational data is collected automatically and accuracy is improved continuously through model retraining (the data flywheel), securing long-term value from AI that adapts to environmental change.

・Deploying AI across the entire company shifts spending from scattered deployments to concentrated investment, maximizing token revenue and operational efficiency.

Through these efforts, AI Factory will accelerate the shift from "trying out AI" to "making money with AI."

AI Factory usage scenarios

AI Factory is used in a variety of industries, including manufacturing, finance, and healthcare, and the key is the continuous operation of AI that makes use of your company's own data.

Manufacturing/Logistics DX

・Data from cameras and sensors in the factory is collected in real time in the AI Factory, automating predictive maintenance, visual inspection, and robot control. Creating a digital twin of the entire production line reduces downtime and significantly improves operating rates.

・Centrally managing data from multiple factories, unifying quality standards, and applying optimization rules across the company standardizes the manufacturing process and makes it more efficient.

Finance and Services

・Mass deployment of personalized AI agents based on customer transaction data and inquiry history enables 24-hour automated responses and risk prediction, reducing staff workload and improving customer satisfaction.

・In call center operations, generative AI performs sentiment analysis and automatic summarization of conversations, making operator responses faster and more consistent in quality.

Healthcare and Drug Discovery

・Diagnostic support AI that continually learns from medical images and patient data is used on-site, improving test accuracy, reducing the burden on doctors, and supporting rapid decision-making.

・In the drug discovery process, the AI Factory analyzes molecular structure data and clinical trial results to speed up compound screening and drug efficacy prediction, shortening the time it takes to develop new drugs and improving the success rate.

What these scenarios have in common is that the AI Factory runs a cycle of "data collection → model production → production operation → continuous improvement," turning one-off AI projects into business transformation.

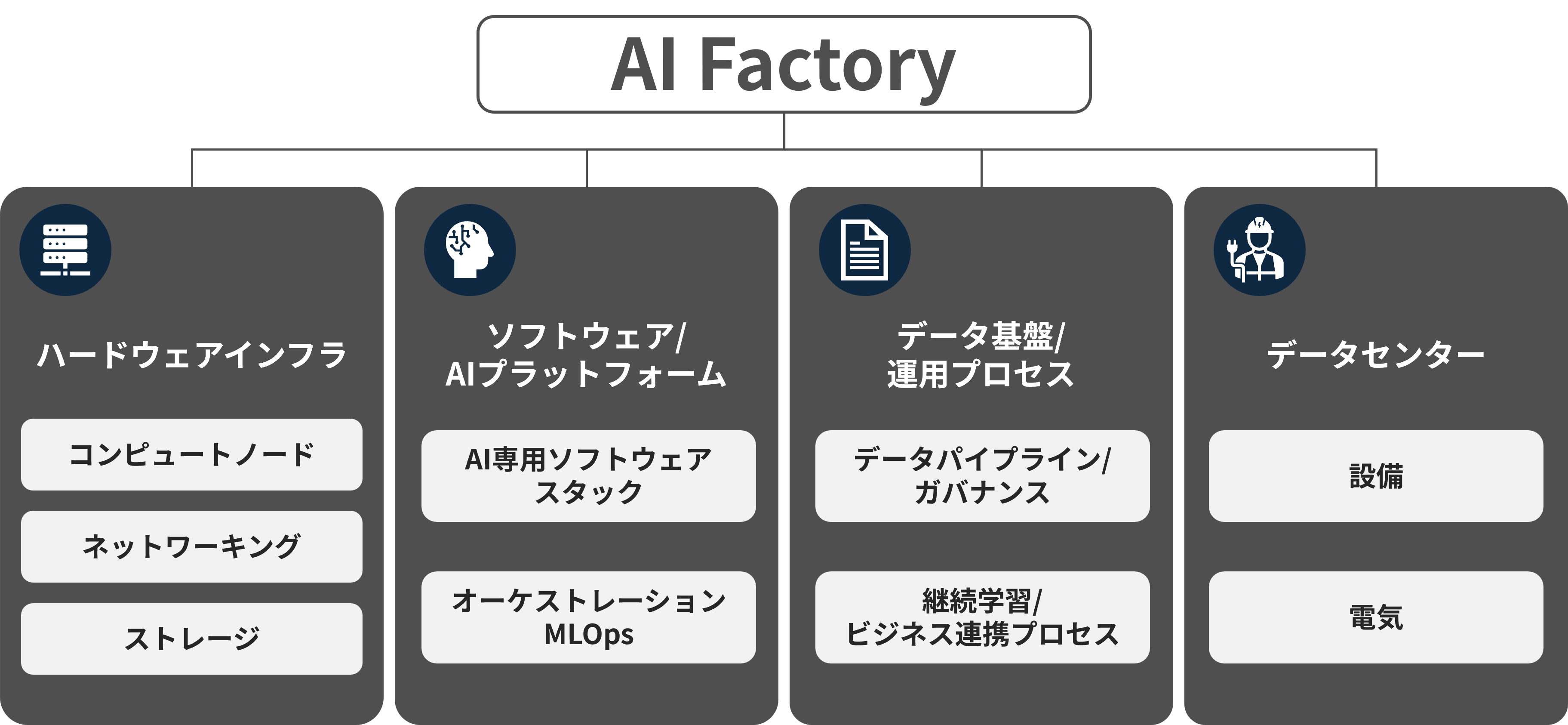

Components of the AI Factory

The AI Factory is primarily composed of a data center, compute nodes, networking, storage, the data to be used, a software stack to manage these, and software to be used in the application layer.

Hardware Infrastructure

・Compute node

- GPU servers built around NVIDIA Blackwell-generation GPUs (B200/GB200), together with high-density compute nodes that combine them with Grace CPUs, serve as the compute engine that supports generative AI and large-scale models.

・Networking

- NVLink/NVSwitch, which connects GPUs, and Spectrum-X, which acts as a data center network, enable high-speed, low-latency communication and ensure scalability between nodes.

・Storage

- Large-capacity, high-throughput storage is used to optimize the input and output of training data. Combined with a highly efficient cooling system, including liquid cooling, it supports the scalability and stable operation of the entire AI cluster.

Software & AI Platform

・AI dedicated software stack

- A series of CUDA-based frameworks supports the entire AI workload, including TensorRT, which accelerates inference, and NVIDIA AI Enterprise, NIM microservices, and NeMo for generative AI.

- This improves the efficiency of training, fine-tuning, and deploying LLMs and multimodal models, enabling companies to quickly build AI services tailored to their use cases.

・Orchestration and MLOps

- The MLOps function, which centrally manages everything from data preprocessing to model training, evaluation, deployment, and monitoring as a pipeline, standardizes and automates the AI lifecycle.

- Governance functions such as security, access control, and audit logs are also built in, and these capabilities are used in AI Factory reference designs for regulated industries and governments.
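As a rough illustration of what "managing the lifecycle as a pipeline" means, the sketch below chains the five stages named above and records an audit log of what ran. It is a toy example, not part of any NVIDIA or Macnica product: the stage functions, `run_pipeline`, the majority-label "model," and the `min_accuracy` quality gate are all hypothetical names introduced for illustration.

```python
from typing import Any, Callable, Dict, List, Tuple

Context = Dict[str, Any]
Stage = Tuple[str, Callable[[Context], Context]]


def preprocess(ctx: Context) -> Context:
    # Data preprocessing: drop records that have no label.
    rows = [(x, y) for x, y in ctx["raw"] if y is not None]
    return {**ctx, "rows": rows}


def train(ctx: Context) -> Context:
    # Toy "training": the model simply predicts the most common label.
    labels = [y for _, y in ctx["rows"]]
    model = max(sorted(set(labels)), key=labels.count)
    return {**ctx, "model": model}


def evaluate(ctx: Context) -> Context:
    # Evaluation: fraction of records the toy model labels correctly.
    rows = ctx["rows"]
    correct = sum(1 for _, y in rows if y == ctx["model"])
    return {**ctx, "accuracy": correct / len(rows)}


def deploy(ctx: Context) -> Context:
    # Deployment gate (assumed policy): only ship if accuracy is high enough.
    return {**ctx, "deployed": ctx["accuracy"] >= ctx["min_accuracy"]}


def monitor(ctx: Context) -> Context:
    # Monitoring: report whether the model is serving or was blocked.
    status = "serving" if ctx["deployed"] else "blocked"
    return {**ctx, "status": status}


STAGES: List[Stage] = [
    ("preprocess", preprocess),
    ("train", train),
    ("evaluate", evaluate),
    ("deploy", deploy),
    ("monitor", monitor),
]


def run_pipeline(stages: List[Stage], ctx: Context) -> Context:
    # Run every stage in order and keep an audit log of what was executed.
    audit_log: List[str] = []
    for name, stage in stages:
        ctx = stage(ctx)
        audit_log.append(name)
    return {**ctx, "audit_log": audit_log}


result = run_pipeline(
    STAGES,
    {
        "raw": [("a", "ok"), ("b", "ok"), ("c", "defect"), ("d", None)],
        "min_accuracy": 0.6,
    },
)
print(result["status"], result["audit_log"])
```

Because every stage has the same shape (context in, context out), stages can be swapped or added without changing the runner, which is the standardization benefit the pipeline approach is after.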

Data infrastructure and operational processes

・Data Pipeline and Governance

- The data infrastructure, which collects data from business systems, IoT devices, and sensors and handles quality control, cataloging, and access-rights management, functions as the "fuel tank" of the AI Factory.

- Thorough data lifecycle management and governance will enable safe handling of training and inference data while meeting compliance requirements.

・Continuous learning and business collaboration process

- The "Data Flywheel" collects logs and feedback from AI services in production and reflects this in model retraining and tuning, providing a mechanism for continuously increasing the value of AI.

- By establishing an operational process that links management and business KPIs with model performance indicators, it will be possible to quantitatively evaluate "how much the AI Factory is contributing to business results."
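The data flywheel described above can be pictured as a small feedback loop: production feedback accumulates, and when recent accuracy falls below a target, retraining is triggered. The following is a minimal sketch under stated assumptions, not an actual AI Factory API: the class `DataFlywheel`, the rolling `window`, and the `min_accuracy` threshold are hypothetical, and `retrain` only bumps a version number where a real system would launch a training job.

```python
from collections import deque


class DataFlywheel:
    """Toy feedback loop: watch recent accuracy and retrain when it degrades."""

    def __init__(self, window: int = 4, min_accuracy: float = 0.75) -> None:
        # Only the most recent `window` feedback items are kept.
        self.feedback = deque(maxlen=window)
        self.min_accuracy = min_accuracy
        self.model_version = 1

    def record(self, was_correct: bool) -> bool:
        """Store one piece of production feedback; return True if retraining ran."""
        self.feedback.append(was_correct)
        if len(self.feedback) < self.feedback.maxlen:
            return False  # not enough evidence yet
        accuracy = sum(self.feedback) / len(self.feedback)
        if accuracy < self.min_accuracy:
            self.retrain()
            return True
        return False

    def retrain(self) -> None:
        # Placeholder for a real retraining job: bump the version and
        # start collecting fresh feedback for the new model.
        self.model_version += 1
        self.feedback.clear()


flywheel = DataFlywheel(window=4, min_accuracy=0.75)
triggers = [flywheel.record(ok) for ok in [True, True, True, False, False]]
print(triggers, flywheel.model_version)
```

In this run, retraining fires only on the fifth feedback item, once two of the last four predictions were wrong. In practice the trigger would more likely be tied to the business KPIs mentioned above rather than to raw accuracy alone.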

Data Center

・Facilities

- A large-scale data center serves as the physical foundation of the AI Factory, integrating all of its elements. Cooling systems, physical security, and server rack layout are optimized to ensure stable operation of AI clusters with thousands of GPUs.

- High-density deployment and easy maintenance are ensured through hyperscaler designs and enterprise-validated data center references.

・Power

- A large-capacity power supply system covers the enormous power consumption of the high-performance GPU cluster. Operating costs are optimized through the use of renewable energy and efficient power management.

- A combination of redundant power supplies and UPS guarantees availability of over 99.999%, ensuring continuous operation of AI workloads.
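To put the 99.999% figure in perspective, the short calculation below converts an availability percentage into allowed downtime per year, which works out to roughly 5.3 minutes for "five nines." It also shows the textbook formula for redundant components in parallel. This is a simplified sketch: the function names are hypothetical, a 365-day year is assumed, and the parallel formula assumes failures are independent, which real power systems only approximate.

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600 minutes, ignoring leap years


def allowed_downtime_minutes(availability: float) -> float:
    """Maximum downtime per year implied by an availability target (0..1)."""
    return (1.0 - availability) * MINUTES_PER_YEAR


def parallel_availability(single: float, copies: int) -> float:
    """Availability of `copies` redundant units, assuming independent failures.

    The system is down only when every unit is down at the same time.
    """
    return 1.0 - (1.0 - single) ** copies


five_nines = allowed_downtime_minutes(0.99999)
redundant = parallel_availability(0.999, 2)
print(f"99.999% allows about {five_nines:.2f} minutes of downtime per year")
print(f"Two independent 99.9% units in parallel: {redundant:.6f}")
```

The second result illustrates why redundancy matters: two units that are each only "three nines" reach "six nines" together under the independence assumption.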

AI Factory Architecture

The AI Factory reference architecture is based on validated configurations, which makes it easy for companies to expand in stages and to build in redundancy. Depending on each customer's business requirements, IT maturity, and expertise, an AI Factory can be implemented in a way that suits their infrastructure strategy, from on-premises to cloud. Three implementation types are available: "DGX SuperPOD," NVIDIA's all-in-one solution; "Enterprise RA," developed in collaboration with major OEM partners; and "NCP (NVIDIA Certified Partner)," which is built on major cloud services.

DGX SuperPOD

・For businesses that need performance and scalability for large-scale model development, this all-in-one on-premises solution from NVIDIA integrates full-stack software on a DGX SuperPOD and comes with direct support.

・AI clusters with thousands of GPUs can be built in a short period of time, providing the highest-performance training and inference environment.

Enterprise RA

・For companies that want to leverage existing relationships with IT partners (Dell, Supermicro, etc.), this option deploys an AI Factory optimized for customer requirements, using validated designs from the OEM partner ecosystem.

・Ideal for enterprise deployments that make use of in-house operational know-how while ensuring gradual expansion and high availability.

NCP (NVIDIA Certified Partner)

・For companies pursuing a cloud-first strategy, this option uses certified partner solutions hosted on major cloud computing platforms (AWS, Azure, Google Cloud) to implement an AI Factory in a cloud-native manner.

・It allows a seamless transition from prototype to production scale, enabling flexible resource expansion while minimizing operational burden.

Macnica's AI Factory Construction Support

GPU cluster environment construction support

Macnica offers GPU cluster environment construction support for customers who want to build an AI Factory. The service ranges from defining system architecture requirements specific to GPU clusters to integration and post-implementation support.

For more details, please see the following page.