This article explains how digital audio is recorded and where information is lost, from the perspectives of sampling and quantization.

What happens when a continuous analog sound is represented only by "points" (sampling) and "steps" (quantization)? By observing actual changes in sound and analyzing waveforms, this article aims to explain the mechanism by which sound (information) is lost and the reasons behind it.

What is the mechanism by which information is lost in digital audio?

Analog audio is information that changes continuously in both the time and amplitude directions. However, digital devices can only handle discrete numerical data. Therefore, when converting analog audio to digital, a two-stage process called sampling and quantization is performed, and some information is inevitably lost during these two processes.

■ Sampling: Cutting out information in the time direction at equal intervals.

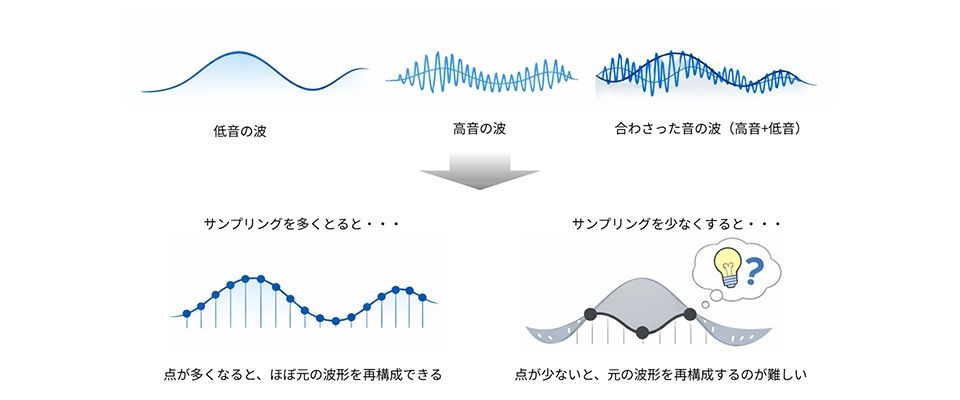

The analog waveform is recorded as "points" at regular intervals. In this process, the subtle changes in the wave between points are not recorded, and some of the information in the time direction is discarded.

■ Quantization: Rounding off small differences in amplitude into steps.

The amplitude (sound level) of each recorded point is rounded to one of a predetermined number of steps and saved. The coarser the steps, the less smoothly the original waveform is reproduced.
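
The two stages can be sketched in a few lines of Python. This is a minimal illustration, not a description of how any particular ADC works: the function names `sample` and `quantize`, the 440 Hz test tone, and the normalization of amplitude to the range -1.0 to 1.0 are all assumptions made for the example.

```python
import math

def sample(signal, sample_rate_hz, duration_s):
    """Stage 1: read a continuous-time function at equally spaced instants."""
    count = int(round(sample_rate_hz * duration_s))
    return [signal(n / sample_rate_hz) for n in range(count)]

def quantize(value, bits):
    """Stage 2: round an amplitude in [-1.0, 1.0] to the nearest step."""
    max_code = 2 ** (bits - 1) - 1      # 127 for 8 bits (assumed signed coding)
    return round(value * max_code) / max_code

def tone(t):
    """Hypothetical analog input: a 440 Hz sine wave."""
    return math.sin(2 * math.pi * 440.0 * t)

points = sample(tone, 8000, 0.001)            # 1 ms at 8 kHz gives 8 points
digital = [quantize(p, 8) for p in points]    # each point snapped to an 8-bit step

for p, d in zip(points, digital):
    print(f"{p:+.5f} -> {d:+.5f}")
```

Everything the tone does between two neighboring points is discarded in the first stage, and the small gap between each printed pair is the rounding error introduced in the second.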

Sampling: Where is information lost in the time domain?

Analog audio is a wave (signal) that changes continuously in the time direction. In digitization, however, the shape of the wave is recorded as "points" at regular time intervals (sampling). Because only these instants are kept, the subtle changes between points are not recorded, and some information in the time direction is lost.

What sounds become inaudible at sampling frequencies of 44.1kHz and 8kHz?

The sounds of voices and musical instruments are made up of a mixture of components that fluctuate slowly (low pitch) and components that fluctuate rapidly (high pitch). The higher the pitch, the finer the wave fluctuations, so if the sound is not recorded at short intervals, those fluctuations will be lost. The sampling frequency is a value that indicates how many times the sound is recorded per second.

Example:

44.1kHz → Records 44,100 times per second

8kHz → Records 8,000 times per second
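
To make the spacing between points concrete, the sampling frequency can be converted into the time between neighboring points. The helper name below is hypothetical and the calculation is simply the reciprocal of the sampling frequency.

```python
def sample_interval_us(sample_rate_hz):
    """Time between neighboring points, in microseconds."""
    return 1_000_000 / sample_rate_hz

for rate in (44_100, 8_000):
    print(f"{rate} Hz: one point every {sample_interval_us(rate):.1f} us")
```

At 44.1kHz the points are about 22.7 microseconds apart, while at 8kHz they are 125 microseconds apart, more than five times wider.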

When the spacing between points widens, the fine fluctuations (i.e., high-frequency components) between them rise and fall before the next point is taken, and are therefore not recorded. As a result, the following changes occur at 8kHz.

- Consonants like S and H become weaker.

- The spaciousness and sense of airiness in the high frequencies disappear.

- The overall impression is one of "muffled" or "somber".

At 8kHz, the "fine fluctuations," that is, the high-frequency sounds, are never recorded as points in the first place. When viewed as a waveform, the result appears as a "different wave" from the original.
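
The "different wave" effect can be seen in numbers. If a component above half the sampling frequency reaches the sampler without being filtered out first, its points coincide with those of a lower tone. The sketch below assumes an unfiltered 5 kHz sine sampled at 8 kHz; the frequencies and the helper name `sampled_sine` are chosen only for illustration.

```python
import math

SAMPLE_RATE_HZ = 8000

def sampled_sine(freq_hz, count):
    """Points of a unit sine wave taken at SAMPLE_RATE_HZ."""
    return [math.sin(2 * math.pi * freq_hz * n / SAMPLE_RATE_HZ)
            for n in range(count)]

high = sampled_sine(5000, 16)   # above half the sampling frequency
low = sampled_sine(3000, 16)    # below half the sampling frequency

# Point by point, the 5 kHz tone equals the 3 kHz tone with its sign flipped,
# so the sum of each pair is zero apart from floating-point rounding.
largest_gap = max(abs(h + l) for h, l in zip(high, low))
print(largest_gap)
```

From the recorded points alone, the 5 kHz input cannot be told apart from an inverted 3 kHz tone, so the fine fluctuation is not preserved as itself.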

Why do high frequencies drop off? What is the Nyquist frequency?

In sampling, sound waves are recorded as points at regular intervals. Parts of the wave that fluctuate more finely than these point intervals (i.e., high-pitched sounds) are hidden between the points and not recorded, as shown in the diagram above. To determine that a wave has fluctuated once, two changes are required: an upward movement and a downward movement. Therefore, at least two recordings are necessary to capture one period.

Therefore,

- 44.1kHz → up to approximately 22kHz

- 8kHz → up to 4kHz

This means that only fine fluctuations up to half the sampling frequency can be recorded. This limit is called the "Nyquist frequency," and it is why high frequencies drop off first. Conversely, to accurately reproduce the original waveform, the sampling frequency must be more than twice the highest frequency contained in the signal.
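
The limit is simple arithmetic, sketched below. The function names and the 6 kHz test component (standing in for part of an "S" consonant) are assumptions for illustration only.

```python
def nyquist_hz(sample_rate_hz):
    """Half the sampling frequency: the upper limit of recordable content."""
    return sample_rate_hz / 2

def can_be_recorded(freq_hz, sample_rate_hz):
    """True if the component lies strictly below the Nyquist frequency."""
    return freq_hz < nyquist_hz(sample_rate_hz)

for rate in (44_100, 8_000):
    print(rate, nyquist_hz(rate), can_be_recorded(6000, rate))
```

The same 6 kHz component fits under the 22.05 kHz limit at 44.1kHz but lies above the 4 kHz limit at 8kHz.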

Quantization: Where is information in the amplitude direction lost?

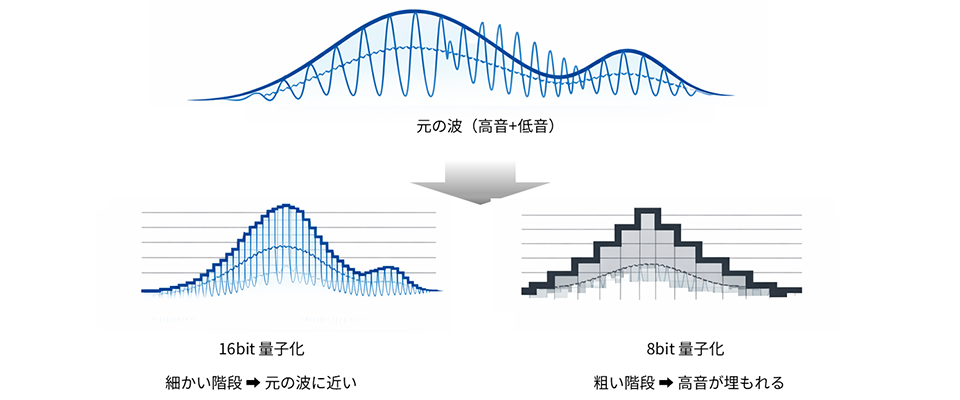

In digitization, the "wave height (amplitude)" of sound is rounded to the nearest of a set of predetermined steps and recorded. This process is called "quantization." The finer the steps, the more precisely the wave height can be represented. Conversely, if the steps are coarse, similar heights are all treated as the same value, resulting in a jagged, "staircase-like" wave.

Quantization determines the level of detail in the "vertical direction (height)" of a signal, whereas sampling determines the level of detail in the "horizontal direction (time axis)." This mechanism is the root cause of phenomena such as "8-bit audio sounding grainy" and "small sounds disappearing."

Reasons why 8-bit sound becomes grainy

The difference between 8-bit and 16-bit is the number of levels at which wave height can be represented.

16-bit → 65,536 levels

8-bit → 256 levels

The coarser the gradation (8 bits), the larger the rounding error in height, resulting in a jagged wave.

As a result,

- The sound is rough.

- Small vibrations (high-pitched sounds) disappear.

- The top of the wave is rounded.

This is what gives 8-bit audio its characteristic sound quality.
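
The level counts follow directly from the bit depth, and so does the height of one step. The sketch below assumes the amplitude range -1.0 to 1.0 is split evenly into levels; the function names are hypothetical.

```python
def levels(bits):
    """Number of distinct heights that can be stored."""
    return 2 ** bits

def step_size(bits, full_scale=2.0):
    """Height of one step when a range of width full_scale is split evenly."""
    return full_scale / levels(bits)

for bits in (16, 8):
    step = step_size(bits)
    # Rounding to the nearest step is off by at most half a step
    print(f"{bits}-bit: {levels(bits)} levels, step {step}, max error {step / 2}")
```

Each 8-bit step is 256 times taller than a 16-bit step, so the worst-case rounding error is 256 times larger as well.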

Quantization error as seen in waveforms: The mechanism behind the jagged appearance

The wave in the upper center of the diagram below is the "original wave," a mixture of low-frequency (slow) and high-frequency (finely fluctuating) components. With 16-bit quantization (lower left diagram), the steps are finer, so even after the height is rounded, the wave is reproduced in a form very close to the original. With 8-bit quantization (lower right diagram), however, the steps are coarser, so fine differences in height are absorbed within the steps.

As a result,

- The waves become "staircase-like and jagged".

- Fine vibrations (high frequencies) are buried and disappear within the gradation.

- It looks like a "round, separate wave" with only the low frequencies remaining.

The diagram below visually illustrates this point.
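
The same comparison can also be made numerically. The sketch below builds a hypothetical "original wave" from a slow 220 Hz component and a fine 3.1 kHz ripple, quantizes it at 8 and 16 bits without dithering, and measures the RMS difference from the original. The frequencies, amplitudes, and signed rounding scheme are assumptions chosen for illustration.

```python
import math

def quantize(value, bits):
    """Round an amplitude in [-1.0, 1.0] to the nearest step (no dither)."""
    max_code = 2 ** (bits - 1) - 1
    return round(value * max_code) / max_code

def rms_error(samples, bits):
    """Root-mean-square gap between the samples and their quantized values."""
    squared = [(s - quantize(s, bits)) ** 2 for s in samples]
    return math.sqrt(sum(squared) / len(squared))

RATE = 44_100
wave = [0.6 * math.sin(2 * math.pi * 220 * n / RATE)
        + 0.3 * math.sin(2 * math.pi * 3100 * n / RATE)
        for n in range(4410)]   # 0.1 s of signal

err8 = rms_error(wave, 8)
err16 = rms_error(wave, 16)
print(f"8-bit RMS error:  {err8:.6f}")
print(f"16-bit RMS error: {err16:.8f}")
```

The 8-bit error should come out far larger than the 16-bit error, roughly in line with the 256-fold difference in the number of levels.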

Relationship between dynamic range and noise floor

As the number of quantization bits increases, the number of levels that can represent the amplitude of a wave increases, allowing even small sounds (weak signals) to be recorded as levels. With 8 bits, on the other hand, the levels are coarser, so the amplitude of a weak sound can fall within a single level and be treated as "0". The boundary below which sounds are too small to be recorded is called the noise floor.

- Sounds below the noise floor → disappear (are buried in the noise level)

- Sounds above the noise floor → are expressed in stages.

As a result,

- 16-bit → Low noise floor

→ It can express even quieter sounds.

→ Wide dynamic range (the range of sounds that can be expressed)

- 8-bit → High noise floor

→ Small sounds are easily silenced.

→ Narrow dynamic range

Furthermore, the coarseness of the steps themselves manifests as quantization noise (a crackling background noise).
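
Both effects can be checked with a short calculation. The sketch below uses the idealized figure of 20·log10(2^bits), about 6.02 dB per bit, as the dynamic range, and quantizes a very quiet tone without dithering. The tone's amplitude of 0.002 (relative to a full scale of 1.0), its frequency, and the function names are assumptions for illustration.

```python
import math

def dynamic_range_db(bits):
    """Idealized ratio of full scale to one step, in decibels."""
    return 20 * math.log10(2 ** bits)

def quantize_code(value, bits):
    """Integer level assigned to an amplitude in [-1.0, 1.0] (no dither)."""
    max_code = 2 ** (bits - 1) - 1
    return round(value * max_code)

RATE = 8000
quiet = [0.002 * math.sin(2 * math.pi * 440 * n / RATE) for n in range(80)]

codes8 = [quantize_code(s, 8) for s in quiet]
codes16 = [quantize_code(s, 16) for s in quiet]

for bits, codes in ((8, codes8), (16, codes16)):
    loudest = max(abs(c) for c in codes)
    print(f"{bits}-bit: {dynamic_range_db(bits):.1f} dB, loudest level {loudest}")
```

At 8 bits every point of the quiet tone rounds to level 0, so the sound disappears entirely, while at 16 bits the same tone still spans dozens of levels.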

What changes when we can "explain the cause" from waveforms?

Understanding sampling and quantization allows you to explain changes in sound not just "intuitively," but by identifying which parts of the waveform are being lost. This means you can make decisions about audio design and evaluation based on "evidence" rather than "intuition."

Here, we'll briefly explain how this understanding can be beneficial in actual development work.

■ Makes the evaluation criteria for microphones and ADCs easier to understand.

Understanding sampling and quantization will allow you to explain "why sounds are the way they are" by looking at waveforms.

Example:

- The drop in high frequencies is caused by the "sampling interval."

- Quiet sounds get buried because of the "step size set by the bit depth."

This way, you'll naturally understand which settings are affecting which parts of the sound. This alone makes design decisions much easier.

■ Moving beyond "just a hunch" approach to noise reduction

Developing the ability to interpret waveforms allows you to move beyond vague descriptions like "noisy" or "gritty" and ask specific questions:

- Is it due to "missing small vibrations"?

- Is it due to the "coarseness of the steps"?

This helps you pinpoint the cause of the problem. As a result, it becomes easier to understand what needs to be adjusted next.

■ Makes it easier to understand the meaning of preprocessing for speech recognition and compression.

Speech recognition and compression always involve processes that "eliminate unnecessary fluctuations" and "retain only the necessary parts." Understanding sampling and quantization allows you to intuitively understand "why that process is necessary," making it easier to grasp the intentions behind the settings and tuning.

Summary: There is always a reason for sound degradation. Understanding that reason is the first step.

Digital audio degradation doesn't just happen "randomly." Sampling removes subtle fluctuations in the time direction, while quantization removes subtle differences in amplitude. In other words, any change in sound always has a cause: "some part of the waveform is being lost."

This article has covered:

- Reasons why high notes drop off

- Reasons why the sound is rough

- Reasons why small sounds disappear

- Reasons why it sounds like a different wave

You should now understand that all of these are connected to the "mechanism of waveforms and digitization." With this understanding alone, your daily evaluations, designs, and troubleshooting can be based on evidence rather than intuition. Sampling and quantization are the "entry points" that you should grasp first when working with digital audio, and once you understand them, it will be much easier to understand topics you will deal with later, such as "noise reduction," "filtering," "speech recognition preprocessing," and "codecs and compression methods."

There is always a reason why sounds change and sound differently. Being able to explain those reasons using waveforms is the first step to becoming a technician who can "work with sound."

Related Information

After going through the "sampling and quantization" process explained so far, an audio development platform is useful when you want to "work with" the digitized sound. DSP Concepts' audio development platform, "Audio Weaver," makes it easy to "work with" sound, such as correcting and enhancing it, through a GUI-based interface.
