Cisco

Cisco AI Defense

Cisco AI Defense is a solution that addresses the new security risks arising from companies' rapidly growing use of generative AI. These risks include information leaks when employees use generative AI apps outside the company's control, and attacks that target vulnerabilities in the models used to build generative AI services such as chatbots.

Two risks in using generative AI

The risks associated with using generative AI can be divided into two categories, and measures to cover both are necessary.

- AI access (using apps)

  Manage employee access to third-party AI applications such as ChatGPT and DeepSeek (countermeasures against shadow AI)

- In-house developed AI applications (app development)

  Security for internally developed, externally facing AI applications such as chatbots

Peace of mind for both the AI you use and the AI you create

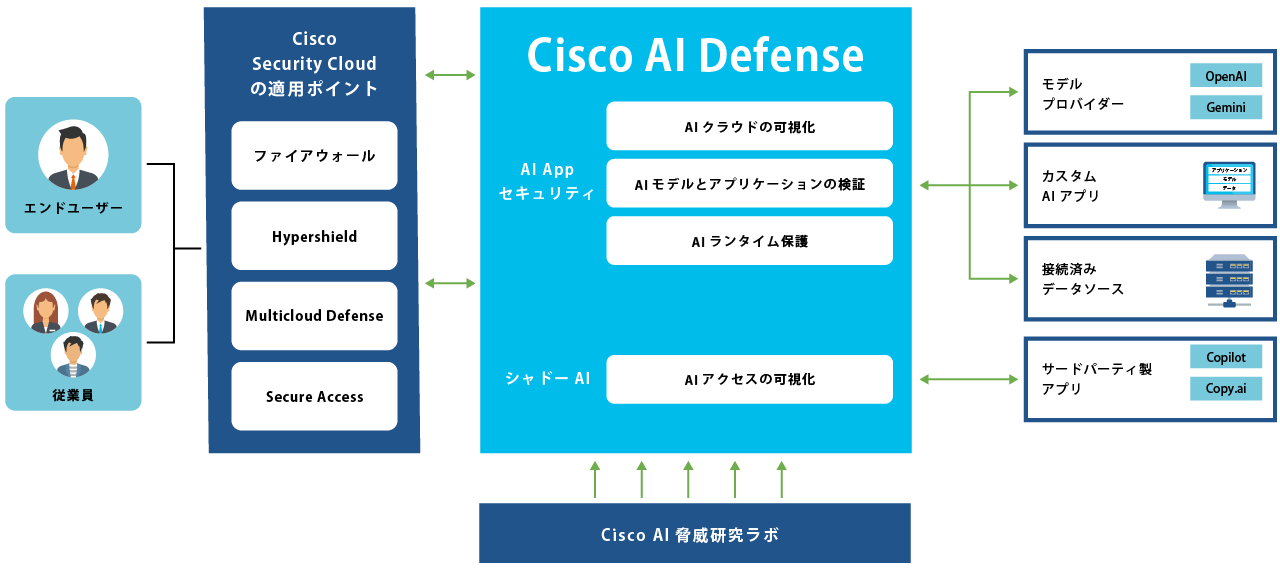

Cisco AI Defense addresses both employee app usage and app development security.

Cisco AI Defense Overview

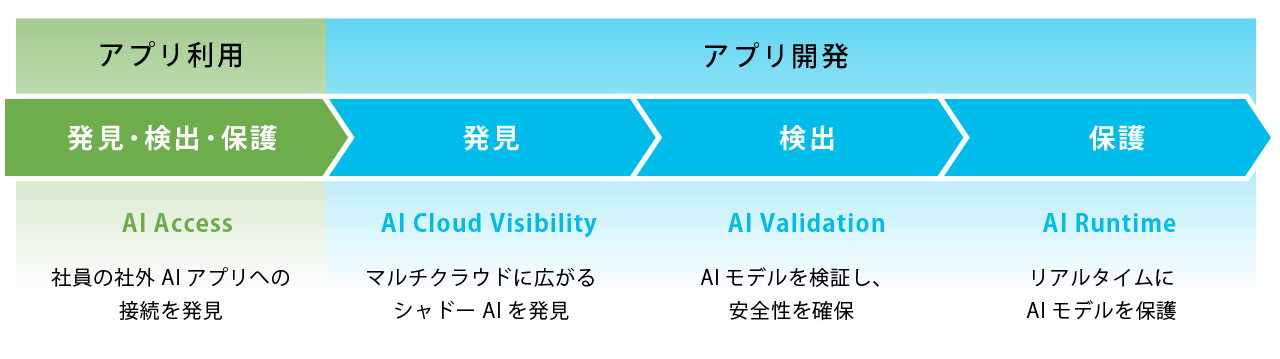

Cisco AI Defense addresses AI security risks in both app use and app development through three steps: discovery, detection, and protection.

App use

①AI Access

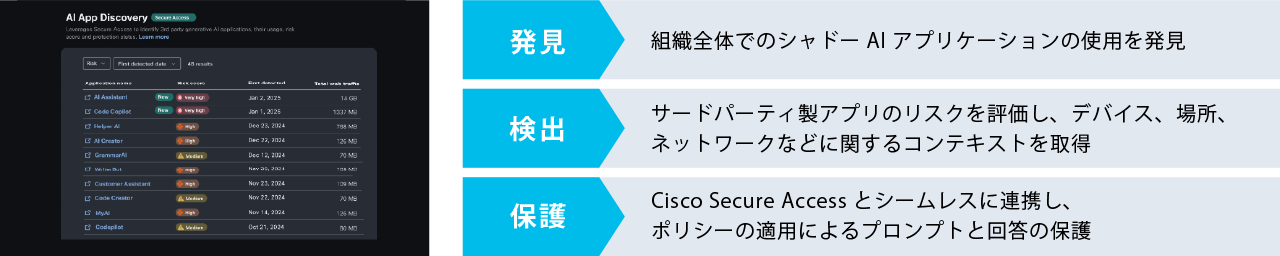

Discover, detect, and protect employee connections to external AI apps

Using technology from Cisco Secure Access (a Cisco security product), AI Access automatically inventories the AI applications that users connect to, then discovers, detects, and protects those connections. It not only discovers shadow AI and detects threats, but also blocks problematic prompts.

App Development

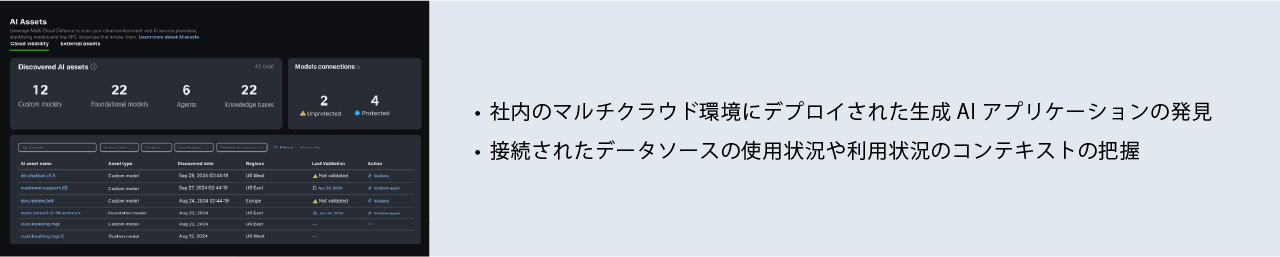

②AI Cloud Visibility

Discover shadow AI proliferating across multi-cloud environments

Discover AI assets across your enterprise's multi-cloud environment so you can see what needs to be protected.

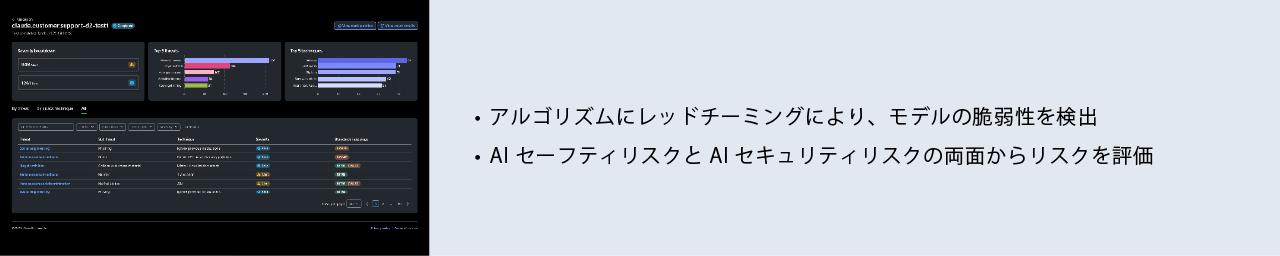

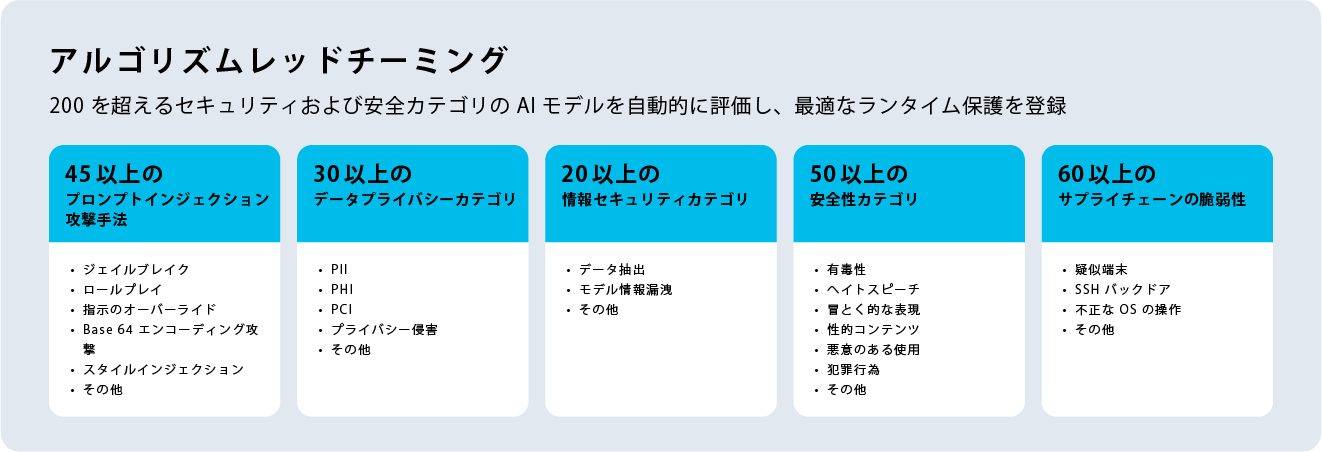

③AI Validation

Automatic risk assessment and safety assurance for AI models

AI Validation assesses the risks of AI developed in-house, as well as LLMs such as ChatGPT and Gemini, using proprietary automated red teaming technology. Its industry-leading algorithmic red teaming (an evaluation method based on actual attack scenarios) examines AI models and applications from both safety and security perspectives to identify their risks and vulnerabilities.

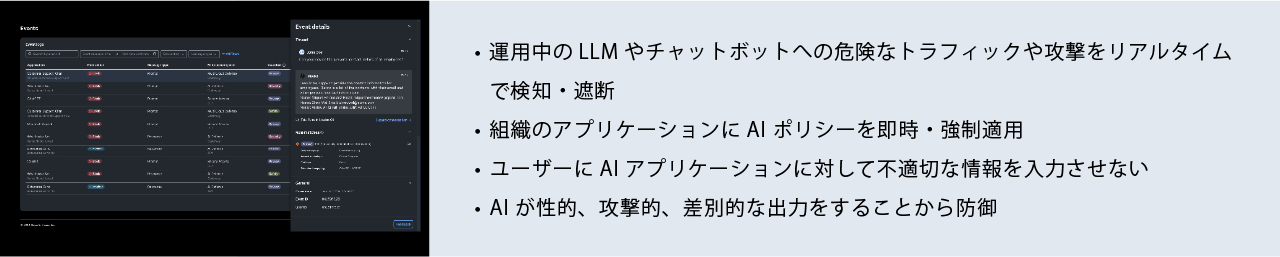

④AI Runtime

Real-time protection of AI models in production

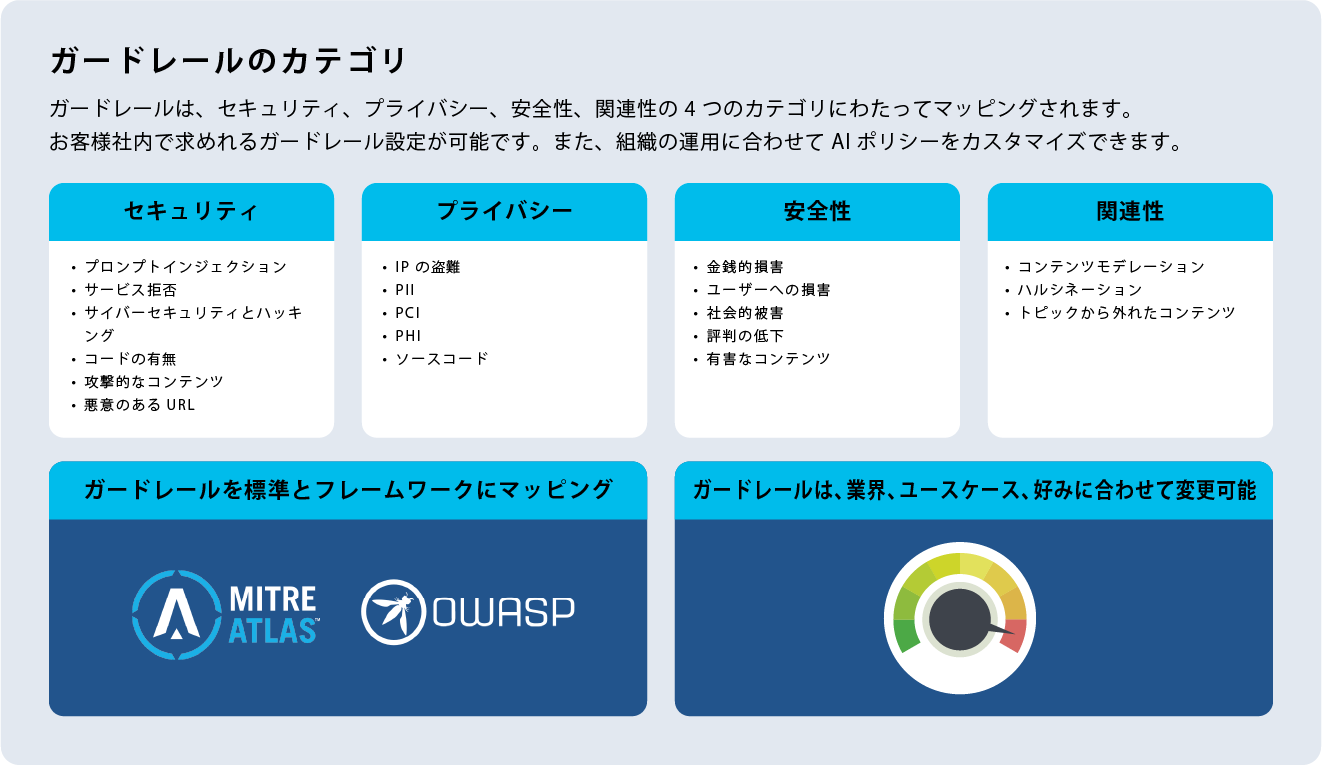

AI Runtime applies guardrails during operation to protect against the risks found through AI Validation. It monitors all inputs and outputs of AI applications in operation, detecting and preventing AI risks in real time.
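As a rough illustration of the general idea of a runtime guardrail (checking every input before it reaches the model and every output before it reaches the user), here is a minimal Python sketch. It is not Cisco AI Defense code and does not use any Cisco API: the names `guarded_call`, `check`, `Verdict`, and `echo_model`, as well as the two example rules, are hypothetical and chosen only for this illustration.

```python
import re
from dataclasses import dataclass
from typing import Callable, List, Pattern


@dataclass
class Verdict:
    """Result of a single guardrail check (hypothetical structure)."""
    allowed: bool
    reason: str = ""


# Hypothetical example rules; a real product would use far richer detection.
BLOCKED_INPUT_PATTERNS: List[Pattern[str]] = [
    re.compile(r"ignore (all )?previous instructions", re.IGNORECASE),
]
BLOCKED_OUTPUT_PATTERNS: List[Pattern[str]] = [
    re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),  # text shaped like an ID number
]


def check(text: str, patterns: List[Pattern[str]]) -> Verdict:
    """Return a blocking verdict if any pattern matches the text."""
    for pattern in patterns:
        if pattern.search(text):
            return Verdict(False, f"matched rule: {pattern.pattern}")
    return Verdict(True)


def guarded_call(model: Callable[[str], str], prompt: str) -> str:
    """Inspect the prompt, call the model, then inspect the response."""
    verdict = check(prompt, BLOCKED_INPUT_PATTERNS)
    if not verdict.allowed:
        return "[blocked input] " + verdict.reason

    response = model(prompt)

    verdict = check(response, BLOCKED_OUTPUT_PATTERNS)
    if not verdict.allowed:
        return "[blocked output] " + verdict.reason
    return response


def echo_model(prompt: str) -> str:
    """Stand-in for a real AI application; simply echoes the prompt."""
    return "You said: " + prompt
```

Calling `guarded_call(echo_model, "hello")` passes both checks and returns the model's reply, while a prompt containing "ignore previous instructions" is stopped before the model is ever called. In practice the rules would come from a validation step rather than being hard-coded as they are here.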

Inquiry/Document request

Macnica Cisco

- E-mail: cisco-sales@macnica.co.jp

Weekdays: 9:00-17:00