This article is recommended for:

Data scientists, machine learning engineers, anyone studying artificial intelligence

Introduction

This column is a report on our participation in NeurIPS 2019.

Members of Macnica's AI Research & Innovation Hub (ARIH) attended NeurIPS 2019, held in Vancouver, Canada, from December 8 to 14, 2019.

NeurIPS 2019 was held in Canada for the second year in a row and drew a huge crowd.

Tickets for attending were allocated by lottery, so only the winners could take part, a sign of how popular the conference has become.

In this first report on NeurIPS 2019, I focus on two questions:

・What is the position of NeurIPS among many AI societies?

・What are the AI technology trends seen from the submitted papers?

From these perspectives, I would like to share the latest AI information I gathered by attending the conference!

Positioning of NeurIPS

The table below summarizes the characteristics of the major AI-related conferences in 2020.

| Field | Name | Description | Location | Month |
|---|---|---|---|---|
| AI in general | IJCAI | The world's top conference covering not only machine learning but AI in general | Yokohama | July |
| AI in general | AAAI | Conference on par with IJCAI | New York | February |
| AI in general | JSAI | Japanese conference: the National Conference of the Japanese Society for Artificial Intelligence | Kumamoto | June |
| Statistical machine learning / deep learning | NeurIPS | Top machine learning conference, formerly known as NIPS | Canada | December |
| Statistical machine learning / deep learning | ICML | Top conference alongside NeurIPS | Austria | July |
| Statistical machine learning / deep learning | IBIS | Japan's largest machine learning workshop | TBD | TBD |
| Computer vision | CVPR | Top conference in computer vision | USA | June |
| Computer vision | ICCV | Conference on par with CVPR (held every other year) | TBD | TBD |

* Surveyed in January 2020. All conference dates are for 2020.

Among these many conferences, NeurIPS is a top conference for machine learning.

With roughly 13,000 attendees and 9,185 submitted papers, NeurIPS 2019 was extremely popular even by the standards of major international conferences and is arguably one of the most authoritative.

I would like to look back on this year's AI technology trends as seen from NeurIPS 2019 based on the titles and presentations of the submitted papers!

NeurIPS 2019 AI Technology Trends Seen from Submitted Papers

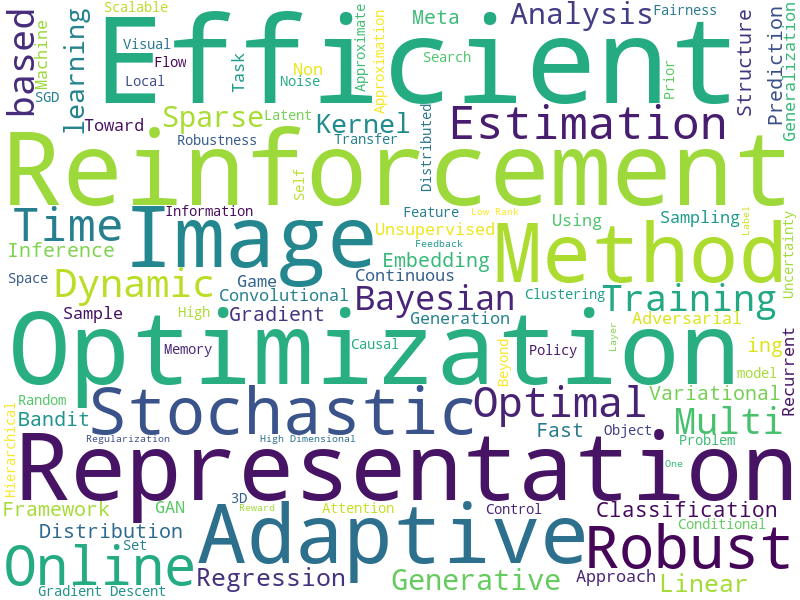

This word cloud was built from the titles of the 1,428 papers accepted at NeurIPS 2019: words that appear frequently in the titles are drawn large and infrequent ones small, to show technology trends at a glance. (Colors have no meaning.)

* Created from https://nips.cc/Conferences/2019/Schedule?type=Poster.
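The aggregation behind a word cloud is essentially word counting. The sketch below shows that step on a few hypothetical titles (not the real NeurIPS list); the stop-word set and the simple lowercase tokenization are assumptions, and the figure above may well have been produced differently.

```python
import re
from collections import Counter

# Hypothetical example titles; the real input would be the 1,428 accepted-paper titles.
titles = [
    "Efficient Bayesian Optimization for Reinforcement Learning",
    "Stochastic Optimization with Bayesian Priors",
    "Efficient Exploration in Reinforcement Learning",
]

# Assumed stop-word list: common words that carry no topic information.
STOP_WORDS = {"for", "with", "in", "of", "the", "a", "an", "and", "on"}


def keyword_counts(titles):
    """Count how often each lowercased word appears across all titles."""
    counts = Counter()
    for title in titles:
        for word in re.findall(r"[a-z]+", title.lower()):
            if word not in STOP_WORDS:
                counts[word] += 1
    return counts


counts = keyword_counts(titles)
# In a word cloud, the font size of each word grows with its count.
print(counts.most_common(5))
```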

The most frequent keywords in 2019 were 'Optimization', 'Reinforcement', 'Efficient' and 'Bayesian'.

Below, I outline each one so that anyone can follow along, without going deep into theory or formulas.

■ Optimization

Well-known approaches to solving problems with AI include image processing, statistics, and deep learning, but many problems can also be solved with optimization.

An optimization problem is a problem of finding the best solution under limited conditions.

Optimization problems typically come up as route optimization and placement optimization. A specific example of route optimization is as follows.

[Limited conditions]: On the way from the food production factory to the convenience store

[Pursue the best]: What kind of transportation route minimizes the transportation cost?
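A minimal sketch of the route example, assuming the road network is given as a graph whose edge weights are transport costs; the node names and costs are hypothetical. It uses Dijkstra's algorithm, one standard way to find a minimum-cost route when all costs are non-negative. Real logistics problems usually add further conditions (vehicle capacity, delivery time windows) that this sketch ignores.

```python
import heapq

# Hypothetical road network: node -> list of (neighbor, transport cost).
roads = {
    "factory": [("depot_a", 4), ("depot_b", 1)],
    "depot_a": [("store", 1)],
    "depot_b": [("depot_a", 2), ("store", 5)],
    "store": [],
}


def cheapest_route(graph, start, goal):
    """Dijkstra's algorithm: return (total cost, route) of the cheapest path."""
    queue = [(0, start, [start])]  # (cost so far, current node, path taken)
    best = {start: 0}
    while queue:
        cost, node, path = heapq.heappop(queue)
        if node == goal:
            return cost, path
        if cost > best.get(node, float("inf")):
            continue  # stale entry: a cheaper way to this node was already found
        for neighbor, step_cost in graph[node]:
            new_cost = cost + step_cost
            if new_cost < best.get(neighbor, float("inf")):
                best[neighbor] = new_cost
                heapq.heappush(queue, (new_cost, neighbor, path + [neighbor]))
    return float("inf"), []  # goal unreachable


print(cheapest_route(roads, "factory", "store"))
# → (4, ['factory', 'depot_b', 'depot_a', 'store'])
```

Note that the cheapest route here goes through both depots: the detour costs less in total than either direct-looking option.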

A specific example of a placement optimization problem is as follows.

[Limited conditions]: On the production line of the component manufacturing factory

[Pursue the best]: What kind of arrangement of people and machine tools reduces the defective product rate?

These problems are easy to describe in words, but when actually working on them, it is difficult to express the "limited conditions" and the "best" as formulas and programs.
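To illustrate what putting the "limited conditions" and the "best" into a program can look like, here is a toy version of the placement example with hypothetical defect rates: three workers must each be assigned to exactly one of three machine stations (the limited condition), and we want the assignment with the lowest summed defect rate (the best; summing rates is a simplifying assumption). The sketch simply tries every assignment, which only works for tiny instances; realistic sizes call for dedicated optimization solvers.

```python
from itertools import permutations

# Hypothetical defect rates (%) when worker i operates station j.
defect_rate = [
    [2.0, 3.5, 1.0],  # worker 0
    [1.5, 2.0, 3.0],  # worker 1
    [3.0, 1.0, 2.5],  # worker 2
]


def best_placement(rates):
    """Try every one-to-one assignment of workers to stations.

    Constraint: each worker gets exactly one station and vice versa
    (guaranteed by enumerating permutations).
    Objective: minimize the summed defect rate.
    """
    n = len(rates)
    best_total, best_assign = float("inf"), None
    for stations in permutations(range(n)):
        total = sum(rates[worker][station] for worker, station in enumerate(stations))
        if total < best_total:
            best_total, best_assign = total, stations
    return best_total, best_assign


total, assignment = best_placement(defect_rate)
print(total, assignment)  # → 3.5 (2, 0, 1)
```

The result means worker 0 goes to station 2, worker 1 to station 0, and worker 2 to station 1.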

■ Reinforcement

The machine learning methods used in business are generally "supervised learning" and "unsupervised learning", but in research, "reinforcement learning" is also attracting attention.

Supervised learning is the most widely used machine learning method, which learns the relationship between inputs and outputs.

For example, in supervised learning, when an image of a dog is input, it learns to output the label "dog".
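A toy illustration of learning the relationship between inputs and outputs from labeled examples: a 1-nearest-neighbor classifier, chosen here only because it is short. The two numeric features per example are hypothetical stand-ins for what a real image model would extract from pixels.

```python
import math

# Hypothetical labeled training data: (feature vector, label).
training_data = [
    ((0.9, 0.8), "dog"),
    ((0.8, 0.9), "dog"),
    ((0.2, 0.1), "cat"),
    ((0.1, 0.3), "cat"),
]


def predict(features):
    """Return the label of the closest training example (1-nearest neighbor)."""
    _, label = min(training_data, key=lambda item: math.dist(item[0], features))
    return label


print(predict((0.85, 0.75)))  # → dog
```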

Unsupervised learning, on the other hand, learns the structure of the data itself.

For example, in anomaly detection on data collected from a factory, there is often little or no anomalous data, so unsupervised learning can be used to learn the structure of normal data and flag anything that deviates from it as an anomaly.
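A minimal sketch of that idea, assuming a single hypothetical sensor reading per product: the "structure" learned from normal data is just its mean and standard deviation, and a new reading is flagged when it lies more than three standard deviations away (the threshold is an assumption). Real systems model much richer structure, but the principle of training only on normal data is the same.

```python
import statistics

# Hypothetical sensor readings collected during normal operation only.
normal_readings = [10.1, 9.8, 10.0, 10.2, 9.9, 10.0, 10.1, 9.9]

# "Learn" the structure of normal data: here, just its center and spread.
mean = statistics.mean(normal_readings)
std = statistics.stdev(normal_readings)


def is_anomaly(reading, threshold=3.0):
    """Flag readings more than `threshold` standard deviations from the normal mean."""
    return abs(reading - mean) / std > threshold


print(is_anomaly(10.05))  # → False
print(is_anomaly(12.0))   # → True
```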

Reinforcement learning is a method of learning what kind of behavior will give the greatest reward in a given environment.

For example, AlphaGo, developed by Google's DeepMind, used reinforcement learning.

I think the method itself is harder to understand than the other two, but it has been actively researched, and at one point there was a lot of hype claiming that "true AI can be built with reinforcement learning."

However, there are challenges in deploying reinforcement learning in business.

Put simply, real-world data is rarely plentiful enough for reinforcement learning.

Reinforcement learning works when large amounts of data can be generated through simulation, as in games, but in reality there are few cases where that much data can be collected, so my impression is that this is a field whose practical use is still expected to grow.
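A toy sketch of learning "what behavior gives the greatest reward" inside a simulated environment, where experience is cheap to generate. It uses tabular Q-learning (one classic algorithm, shown for illustration; it is not the method AlphaGo used) on a hypothetical five-cell corridor: the agent starts at the left end and receives a reward of 1 only when it reaches the right end. The learning rate, discount factor, exploration rate, and episode count are illustrative assumptions.

```python
import random

N_STATES = 5                           # corridor cells 0..4; cell 4 is the goal
ACTIONS = (-1, 1)                      # move left or move right
ALPHA, GAMMA, EPSILON = 0.5, 0.9, 0.1  # learning rate, discount factor, exploration rate


def step(state, action):
    """Simulated environment: move, stay inside the corridor, reward 1 at the goal."""
    next_state = min(max(state + action, 0), N_STATES - 1)
    reward = 1.0 if next_state == N_STATES - 1 else 0.0
    return next_state, reward, next_state == N_STATES - 1


def greedy(q, state, rng):
    """Pick the highest-valued action, breaking ties at random."""
    best = max(q[(state, a)] for a in ACTIONS)
    return rng.choice([a for a in ACTIONS if q[(state, a)] == best])


def train(episodes=500, seed=0):
    rng = random.Random(seed)
    q = {(s, a): 0.0 for s in range(N_STATES) for a in ACTIONS}
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            # Epsilon-greedy: mostly exploit what was learned, sometimes explore.
            action = rng.choice(ACTIONS) if rng.random() < EPSILON else greedy(q, state, rng)
            next_state, reward, done = step(state, action)
            # Q-learning update toward reward + discounted best value of the next state.
            future = 0.0 if done else max(q[(next_state, a)] for a in ACTIONS)
            q[(state, action)] += ALPHA * (reward + GAMMA * future - q[(state, action)])
            state = next_state
    return q


q = train()
policy = [max(ACTIONS, key=lambda a: q[(s, a)]) for s in range(N_STATES - 1)]
print(policy)  # → [1, 1, 1, 1]: move right in every non-goal cell
```

Even this trivial task takes hundreds of simulated episodes, which hints at why real-world data is usually too scarce.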

■ Efficient

The computational process of machine learning takes a long time.

For example, in an image classification task, it is not unusual for training a single high-performing model to take two weeks.

The duration itself is a problem, and it also means waiting two weeks before the model's performance can be properly tested.

You can monitor progress during those two weeks, but you cannot act on the results, for example by changing code or tuning, until training finishes.

In ordinary software development, you can check the output as you build, so AI development is at a disadvantage in this respect.

Therefore, it is important to make learning “efficient”.

For example, slightly changing a formula used in the learning algorithm might cut a two-week training run in half.

This is what we call efficiency.
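As a toy illustration of how a small change to the update formula can shorten training, the sketch below minimizes a hypothetical quadratic loss with plain gradient descent and with gradient descent plus a momentum term (one well-known modification used here as an example, not necessarily what any NeurIPS 2019 paper proposes), counting the steps each needs to get close to the minimum. The loss function, learning rate, momentum coefficient, and tolerance are all assumptions chosen for the demonstration; on this particular problem the momentum version needs well under half as many steps.

```python
import math


def grad(x, y):
    """Gradient of the toy loss f(x, y) = 0.5 * (x**2 + 100 * y**2), minimized at (0, 0)."""
    return x, 100.0 * y


def steps_to_converge(lr=0.01, momentum=0.0, tol=1e-6, max_steps=100_000):
    """Run gradient descent from (1, 1) and count steps until within `tol` of the minimum."""
    x, y = 1.0, 1.0
    vx, vy = 0.0, 0.0
    for step in range(1, max_steps + 1):
        gx, gy = grad(x, y)
        # Velocity update; with momentum=0.0 this reduces to plain gradient descent.
        vx = momentum * vx - lr * gx
        vy = momentum * vy - lr * gy
        x, y = x + vx, y + vy
        if math.hypot(x, y) < tol:
            return step
    return max_steps


plain = steps_to_converge(momentum=0.0)
with_momentum = steps_to_converge(momentum=0.9)
print(plain, with_momentum)
```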

Also, efficiency is important not only in the learning phase but also in the inference phase.

For example, if you build an AI model for autonomous driving that detects people in camera images, but it takes 10 seconds to process a single image, it will be of no practical use.
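A quick back-of-the-envelope check makes the point concrete, assuming a hypothetical vehicle speed of 60 km/h: the snippet computes how far the car travels while the model processes one image. At 10 seconds per image that is roughly 167 m; a hypothetical 50 ms model cuts it to under a meter.

```python
def distance_during_inference(speed_kmh, latency_s):
    """Meters traveled while the model processes one image."""
    speed_m_per_s = speed_kmh * 1000 / 3600
    return speed_m_per_s * latency_s


print(round(distance_during_inference(60, 10), 1))    # → 166.7
print(round(distance_during_inference(60, 0.05), 1))  # → 0.8
```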

Therefore, it can be said that efficiency research is a field that is closely related to business applications.

■ Bayesian

Bayesian statistics is a type of statistics based on "Bayes' theorem" proposed by British mathematician Thomas Bayes in the mid-1700s.
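For reference, Bayes' theorem in its standard form, where H is a hypothesis (for example, "this email is spam") and D is the observed data; the symbol names are chosen here for illustration. The posterior P(H | D) combines the prior belief P(H) with the likelihood P(D | H), and the denominator expands over the two cases "H is true" and "H is false":

```latex
P(H \mid D) = \frac{P(D \mid H)\, P(H)}{P(D)},
\qquad
P(D) = P(D \mid H)\, P(H) + P(D \mid \neg H)\, P(\neg H)
```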

Japanese universities mainly teach inferential statistics, and the term "Bayesian statistics" is not widely known, but Bayesian statistics is very effective when applying machine learning to the real world.

Here, we will introduce the strengths and practical applications of Bayesian statistics.

Compared with inferential statistics, Bayesian statistics has the following two strengths:

1. It can be flexibly combined with domain knowledge, and predictions can be made with less data (subjective probability)

2. It makes it easy to build a mechanism that automatically updates the prediction model as new data comes in (Bayesian update)

* Domain knowledge is the knowledge that people with expertise in the field possess.
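Both strengths can be seen in a small sketch of Bayesian updating for a defect rate, using a Beta prior on a defective / not-defective outcome, a standard conjugate pairing chosen here for illustration. The prior Beta(2, 18), encoding a hypothetical expert belief that about 10% of items are defective, plays the role of domain knowledge; each new batch of observations updates the estimate in closed form, without retraining from scratch. The class name and counts are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class BetaBelief:
    """Belief about a defect rate as a Beta(alpha, beta) distribution."""
    alpha: float  # pseudo-count of defective items
    beta: float   # pseudo-count of good items

    def update(self, defective, good):
        """Bayesian update: with a Beta prior and yes/no data, just add the counts."""
        return BetaBelief(self.alpha + defective, self.beta + good)

    def mean(self):
        """Posterior mean estimate of the defect rate."""
        return self.alpha / (self.alpha + self.beta)


# Hypothetical domain knowledge: an expert expects about 10% defects (2 / (2 + 18)).
belief = BetaBelief(alpha=2, beta=18)
print(belief.mean())  # → 0.1

# New data arrives in batches; each batch updates the current belief.
belief = belief.update(defective=1, good=29)  # Beta(3, 47)
belief = belief.update(defective=0, good=50)  # Beta(3, 97)
print(belief.mean())  # → 0.03
```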

Taking advantage of these strengths, tools such as spam filtering functions using Bayesian filters and medical diagnosis support using Bayesian networks have been developed as practical applications.
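A minimal sketch of the idea behind a Bayesian spam filter, written as a naive Bayes classifier over words (the "naive" assumption is that words occur independently given the class). The tiny training messages are hypothetical, and add-one (Laplace) smoothing is an assumed choice to avoid zero probabilities for unseen words; production filters are considerably more elaborate.

```python
import math
from collections import Counter

# Hypothetical training messages.
spam = ["win money now", "free money offer", "win a free prize"]
ham = ["meeting schedule for monday", "project report attached", "lunch on monday"]


def count_words(messages):
    """Word counts and total word count for one class."""
    words = Counter(w for m in messages for w in m.split())
    return words, sum(words.values())


spam_words, spam_total = count_words(spam)
ham_words, ham_total = count_words(ham)
vocab = set(spam_words) | set(ham_words)
prior_spam = len(spam) / (len(spam) + len(ham))


def log_likelihood(message, words, total):
    """Sum of log P(word | class) with add-one (Laplace) smoothing."""
    return sum(math.log((words[w] + 1) / (total + len(vocab))) for w in message.split())


def spam_probability(message):
    """Posterior P(spam | message) via Bayes' theorem, computed in log space."""
    log_spam = math.log(prior_spam) + log_likelihood(message, spam_words, spam_total)
    log_ham = math.log(1 - prior_spam) + log_likelihood(message, ham_words, ham_total)
    # P(spam | m) = e^ls / (e^ls + e^lh) = 1 / (1 + e^(lh - ls))
    return 1 / (1 + math.exp(log_ham - log_spam))


print(round(spam_probability("free money"), 3))      # → 0.9
print(round(spam_probability("monday meeting"), 3))  # → 0.143
```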

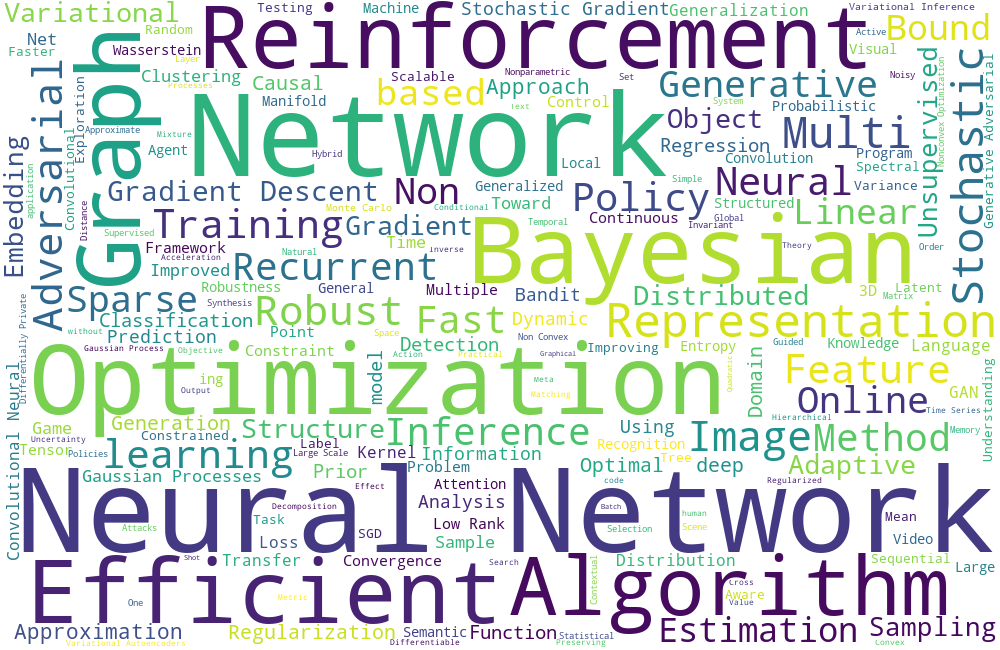

Finally, I would like to talk about "graph theory," which was not covered much this time, but was attracting attention in 2018 due to its power.

*The titles of 1,011 papers selected for NeurIPS 2018 were aggregated, and those with many words included in the titles were displayed larger, and those with fewer words were displayed smaller.

Self-made from https://nips.cc/Conferences/2018/Schedule?type=Poster.

■ Graph

In a nutshell, a graph is a structure that represents things and the connections between them.

For example, neural networks and communication networks are also graphs.

Images and text can also be represented as graphs, and last year saw many attempts to apply such graph-structured data to neural networks such as CNNs.
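The core step that graph neural networks borrow from CNNs is aggregating information from neighbors, much as a convolution aggregates neighboring pixels. Below is a simplified sketch of one such step on a hypothetical four-node graph with one numeric feature per node: plain mean aggregation, without the learned weights and nonlinearity that real graph convolution layers add.

```python
# Hypothetical undirected graph as adjacency lists, and one feature value per node.
neighbors = {
    "A": ["B", "C"],
    "B": ["A", "C"],
    "C": ["A", "B", "D"],
    "D": ["C"],
}
features = {"A": 1.0, "B": 2.0, "C": 3.0, "D": 10.0}


def aggregate(features, neighbors):
    """One message-passing step: each node averages itself with its neighbors."""
    new_features = {}
    for node, nbrs in neighbors.items():
        group = [features[node]] + [features[n] for n in nbrs]
        new_features[node] = sum(group) / len(group)
    return new_features


result = aggregate(features, neighbors)
print(result)  # → {'A': 2.0, 'B': 2.0, 'C': 4.0, 'D': 6.5}
```

Node D's large value spreads to its neighbor C after one step; stacking several steps lets information travel further across the graph.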

Graph theory is applied in the friend-recommendation features of Facebook and Twitter, as well as in analysis methods in biology.
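A minimal sketch of graph-based friend suggestions on a hypothetical friendship graph: recommend people who are not yet your friends, ranked by how many mutual friends you share. This "friends of friends" heuristic is a common textbook idea; it is not a description of how Facebook or Twitter actually implement their features.

```python
from collections import Counter

# Hypothetical friendship graph (undirected): person -> set of friends.
friends = {
    "alice": {"bob", "carol"},
    "bob": {"alice", "carol", "dave"},
    "carol": {"alice", "bob", "dave", "erin"},
    "dave": {"bob", "carol"},
    "erin": {"carol"},
}


def suggest_friends(person):
    """Rank non-friends by the number of mutual friends (friends of friends)."""
    mutual = Counter()
    for friend in friends[person]:
        for candidate in friends[friend]:
            if candidate != person and candidate not in friends[person]:
                mutual[candidate] += 1
    return mutual.most_common()


print(suggest_friends("alice"))  # → [('dave', 2), ('erin', 1)]
```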

Summary

In this first installment, I introduced NeurIPS 2019 in a way anyone can follow, from the following two perspectives:

・What is the position of NeurIPS among many AI societies?

・What are the AI technology trends seen from the submitted papers?

In the second installment, I will dig a little deeper into the trends from the following perspectives:

・AI technology trends seen from actual sessions

・Five AI keywords that ARIH is interested in

Stay tuned!